OpenStreetMap mapping using a car dashcam

I have been an OpenStreetMap mapper for almost four years, mostly sticking to the traditional methods of mapping:

- armchair mapping using JOSM and one of the usual task givers (MapRoulette and HOT)

- outdoor mapping using StreetComplete, Every Door and Vespucci

However, a little over a year and a half ago, I started using a different strategy.

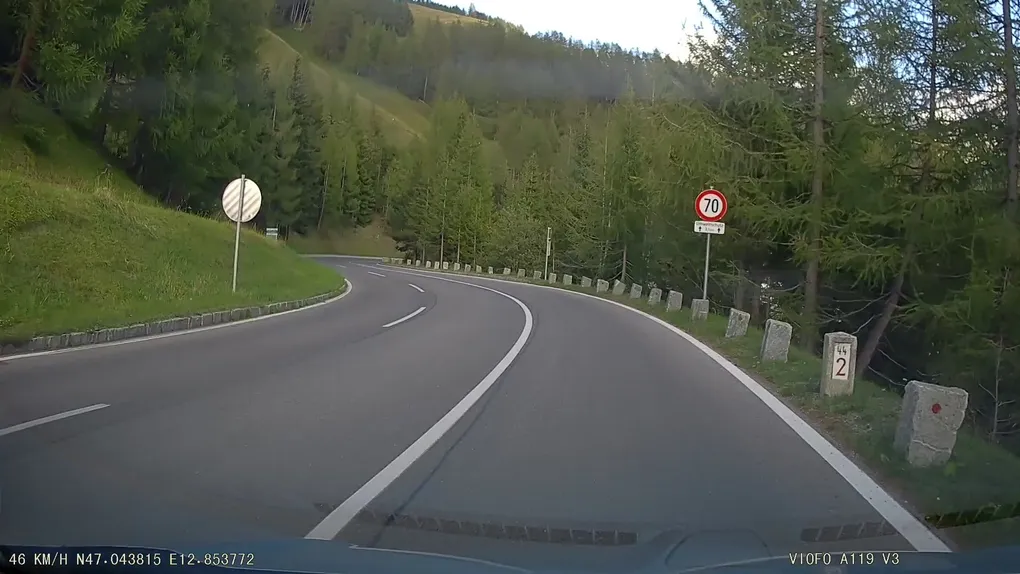

I bought a dashcam for my car, and it changed things. I mainly wanted it to help me review how I react in tricky situations and improve my driving skills. After watching some of the clips, however, I realized that it could be extremely useful for mapping on OpenStreetMap.

Evolution of my workflow

For the first iteration, I simply watched the videos and used the coordinates displayed at the bottom of the frame to identify where to make my edits.

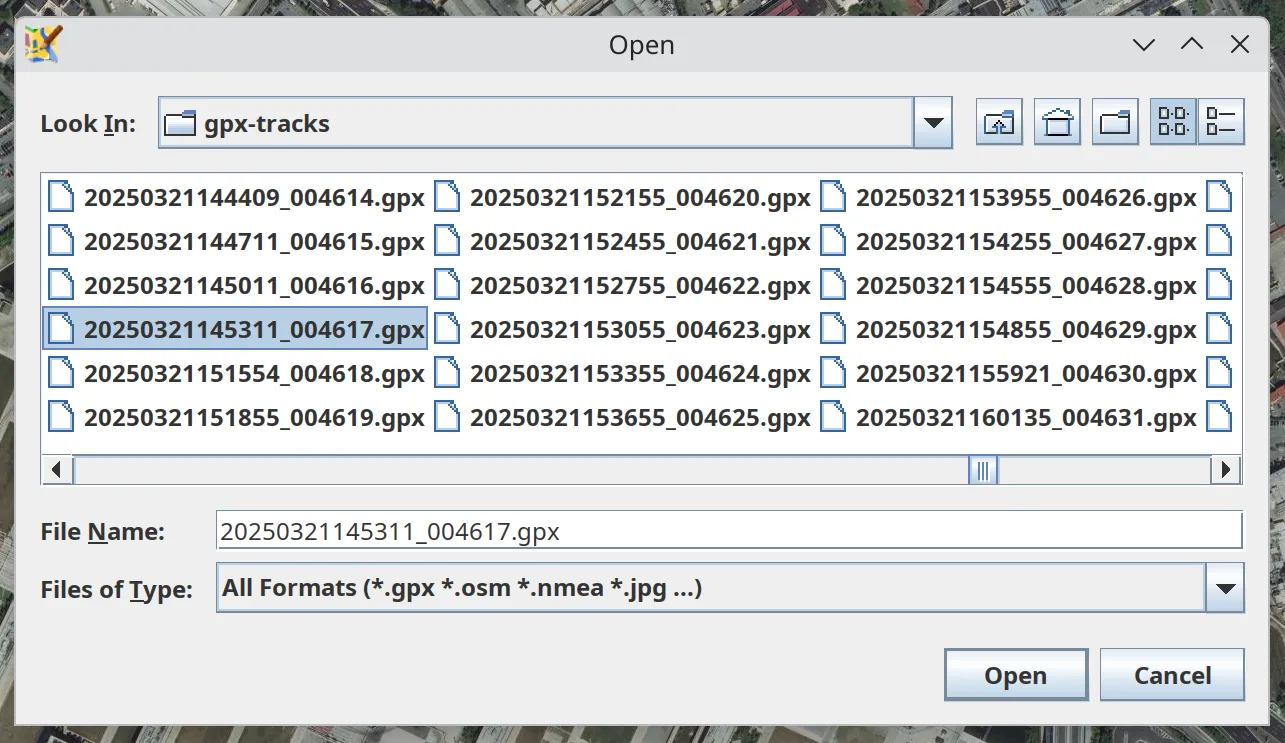

However, after some time, I grew tired of this manual labour. Despite being a software developer, I took the lazy way out and searched for a ready-made solution for extracting GPS coordinates from dashcam footage. Fortunately, the dashcam I bought is straightforward to work with, and a ready-made Python script for extracting GPX data already existed and had received various updates over the years.

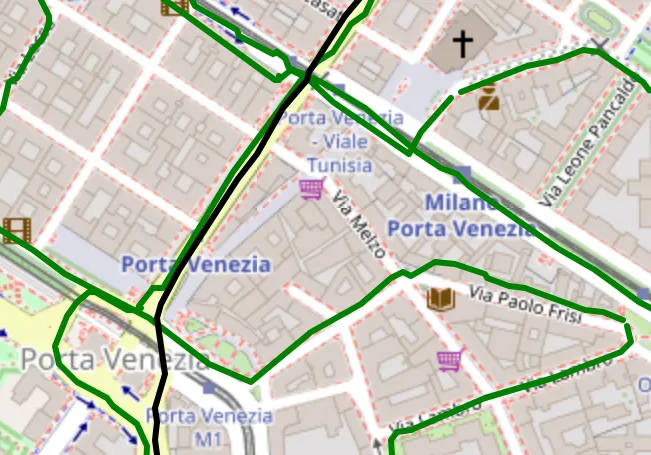

I could then import the extracted GPX data into JOSM and work on each video, knowing exactly where I was.

This simplified the workflow considerably, and I was happy with this solution for a few months.
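For readers unfamiliar with GPX, here is a minimal sketch of the output side of that extraction step. It is not the script I used: it assumes the GPS fixes have already been decoded from the video into `(lat, lon, timestamp)` tuples, and the `Fix` type, the `fixes_to_gpx` name and the `creator` value are all made up for illustration.

```python
import xml.etree.ElementTree as ET

GPX_NS = "http://www.topografix.com/GPX/1/1"

# (latitude, longitude, ISO 8601 UTC timestamp): a hypothetical input shape.
Fix = tuple[float, float, str]


def fixes_to_gpx(fixes: list[Fix], name: str) -> str:
    """Serialise GPS fixes as a single-track, single-segment GPX 1.1 document."""
    gpx = ET.Element(
        "gpx",
        {"version": "1.1", "creator": "dashcam-sketch", "xmlns": GPX_NS},
    )
    trk = ET.SubElement(gpx, "trk")
    ET.SubElement(trk, "name").text = name
    seg = ET.SubElement(trk, "trkseg")
    for lat, lon, timestamp in fixes:
        # GPX stores coordinates as attributes and the time as a child element.
        pt = ET.SubElement(seg, "trkpt", {"lat": f"{lat:.6f}", "lon": f"{lon:.6f}"})
        ET.SubElement(pt, "time").text = timestamp
    return ET.tostring(gpx, encoding="unicode")
```

A file written from this string can be opened in JOSM as a GPX layer like any other track.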

Software Developer Mentality

Although everything was working fine, processing each clip still required some manual labour. This involved double-clicking the video files, opening the GPX file in JOSM, and keeping track of the processed videos.

Ultimately, as a software developer, I have the ability to create and automate whatever I want, so that’s what I did.

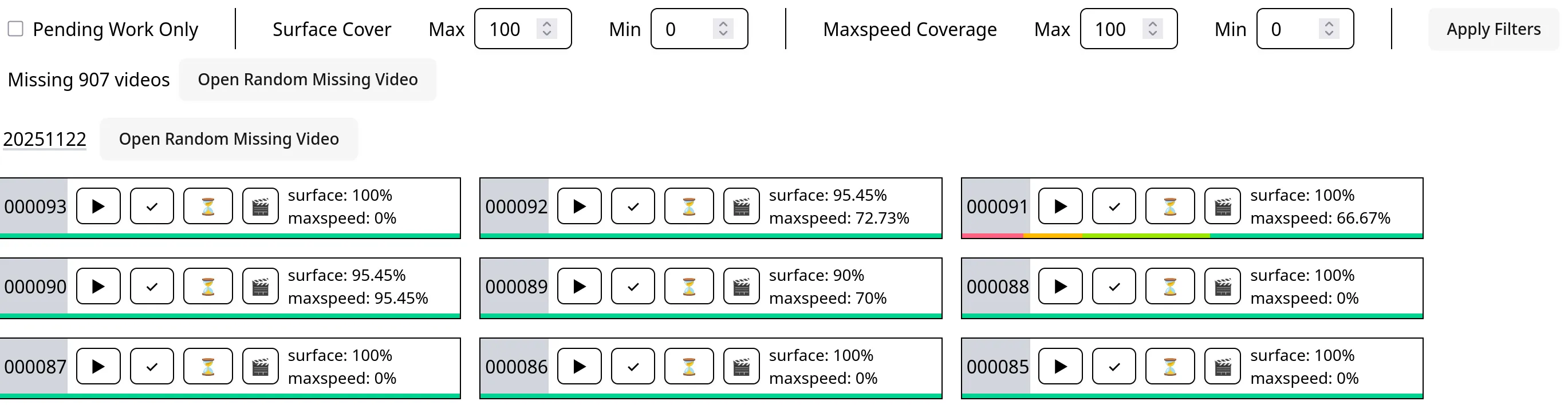

I started with an application to keep track of the processed videos. I wanted to try an ‘Electron alternative’ called Tauri, so I seized the opportunity to start this project with it.

The UI was a simple list of colour-coded blocks, with a button to toggle the video’s state between ‘to do’, ‘work in progress’ and ‘done’.

Although this simplified my workflow, I was still not satisfied.

In the next iteration, I added new capabilities by leveraging Tauri’s ability to interact directly with the terminal, open files, and call API endpoints. With a single click of the ‘open’ button, I was able to:

- open the video file in VLC

- use JOSM’s remote control to open the corresponding GPX file and load the Bing imagery, if not already loaded

- mark the video as ‘work in progress’
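The JOSM part of that button can be sketched in a few lines. The real tool does this from Tauri; the Python below is only an illustration, and it assumes remote control is enabled on its default address, that the `open_file` command takes a `filename` parameter, and that the `imagery` command accepts `id=Bing`. The function names are hypothetical, and the "if not already loaded" check is left out.

```python
from urllib.parse import quote, urlencode
from urllib.request import urlopen

JOSM_BASE = "http://127.0.0.1:8111"  # default JOSM remote control address


def josm_url(command: str, **params: str) -> str:
    """Build a remote control URL such as .../open_file?filename=..."""
    query = urlencode(params, quote_via=quote)
    return f"{JOSM_BASE}/{command}?{query}" if query else f"{JOSM_BASE}/{command}"


def send_to_josm(url: str) -> int:
    """Fire a remote control request and return the HTTP status code."""
    with urlopen(url, timeout=5) as response:
        return response.status


def open_clip_in_josm(gpx_path: str, send=send_to_josm) -> list[str]:
    """Ask JOSM to open the GPX track, then to add a Bing imagery layer."""
    urls = [
        josm_url("open_file", filename=gpx_path),
        josm_url("imagery", id="Bing"),  # assumption: Bing is addressable by id
    ]
    for url in urls:
        send(url)
    return urls
```

Passing `send` as a parameter keeps the URL building testable without a running JOSM instance.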

This greatly simplified the workflow, reducing the required manual labour to just two actions:

- click the ‘open’ button

- download the OSM data along the loaded GPX track

After these essentials, I added additional features that would be nice to have, such as:

- a filter to show only videos with a ‘to do’ status

- a counter showing the number of videos still to be processed

- a random button to open a random ‘to do’ video

And that was it. Or maybe not…

AI everywhere and Work In Progress

Everything was fine until I found myself with some free time and access to a lot of LLMs, both local and remote. Instead of relaxing, I decided to try and improve the existing tool to help me identify the most interesting clips before processing them.

The main idea was to identify the most interesting aspects of each video based on the GPX track. Ultimately, I decided to focus on maximum speed and surface coverage, as well as keeping track of each clip’s overlap with past clips.

First, I migrated the entire codebase to a FastAPI backend and a Vite + React frontend to simplify my own work and that of the LLMs. After that, I created lots of small scripts and tools to handle the data processing:

- download Geofabrik extracts

- process the extract with osmium

- import the processed data into PostGIS

- process each video to generate GPX and GeoJSON tracks

- use the PostGIS data to determine maxspeed and surface coverage

- calculate the overlap with past clips

- calculate the overlap with past clips
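To make the last two metrics concrete, here is a rough sketch in plain Python. It is not the PostGIS-backed code from the tool: it assumes "coverage" means the share of driven length on ways that carry a given tag, it approximates overlap by snapping points to a fixed grid in degrees (about 22 m north to south at the default step), and the function names and the `length_m`/`tags` input shape are hypothetical.

```python
Point = tuple[float, float]  # (lat, lon) in degrees


def tag_coverage(ways: list[dict], key: str) -> float:
    """Share of total way length whose tags include `key` (e.g. 'maxspeed')."""
    total = sum(way["length_m"] for way in ways)
    tagged = sum(way["length_m"] for way in ways if key in way["tags"])
    return tagged / total if total else 0.0


def grid_cells(track: list[Point], step_deg: float = 0.0002) -> set[tuple[int, int]]:
    """Snap each point to a coarse grid cell so nearby points compare equal."""
    return {(round(lat / step_deg), round(lon / step_deg)) for lat, lon in track}


def overlap_ratio(
    new_track: list[Point],
    past_tracks: list[list[Point]],
    step_deg: float = 0.0002,
) -> float:
    """Fraction of the new track's grid cells already touched by past tracks."""
    new_cells = grid_cells(new_track, step_deg)
    if not new_cells:
        return 0.0
    seen: set[tuple[int, int]] = set()
    for track in past_tracks:
        seen |= grid_cells(track, step_deg)
    return len(new_cells & seen) / len(new_cells)
```

A clip with low `tag_coverage` for maxspeed and a low `overlap_ratio` is a good candidate to process first: it crosses roads that lack tags and that I have not already reviewed.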

All the generated data is visualised in the tool’s front end to prioritise or filter the videos.

The Before Driving Phase

Thanks to this set of tools, I could quickly map each area that I visited. The only thing missing was an automated way of identifying roads that I had not visited.

First, I created a simple web application that plots all the GPX files on a map. This gave me an idea of the places I had covered and helped me plan my routes. When planning a journey from A to B, I avoided places I had already visited and planned to travel through areas I had never seen before.

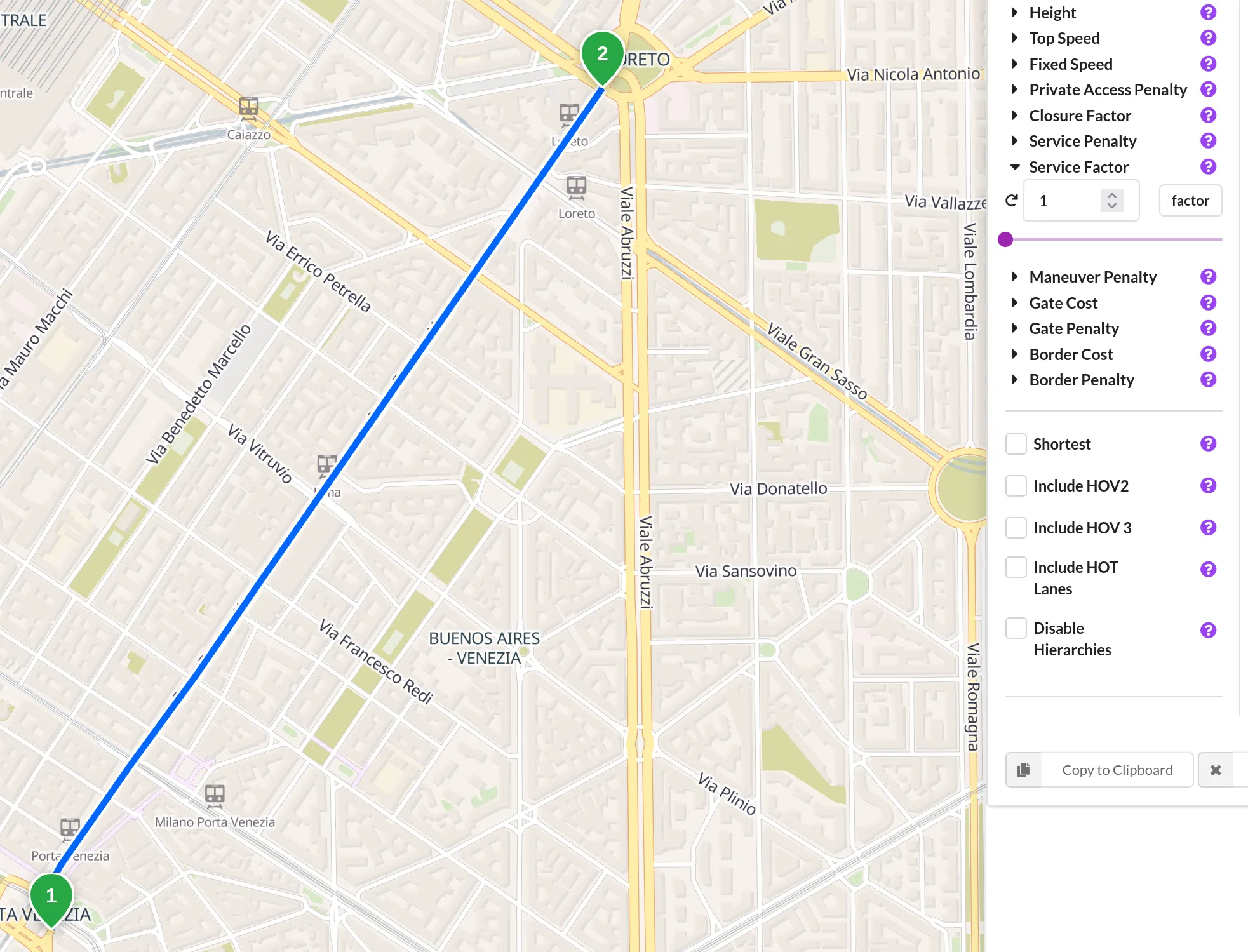

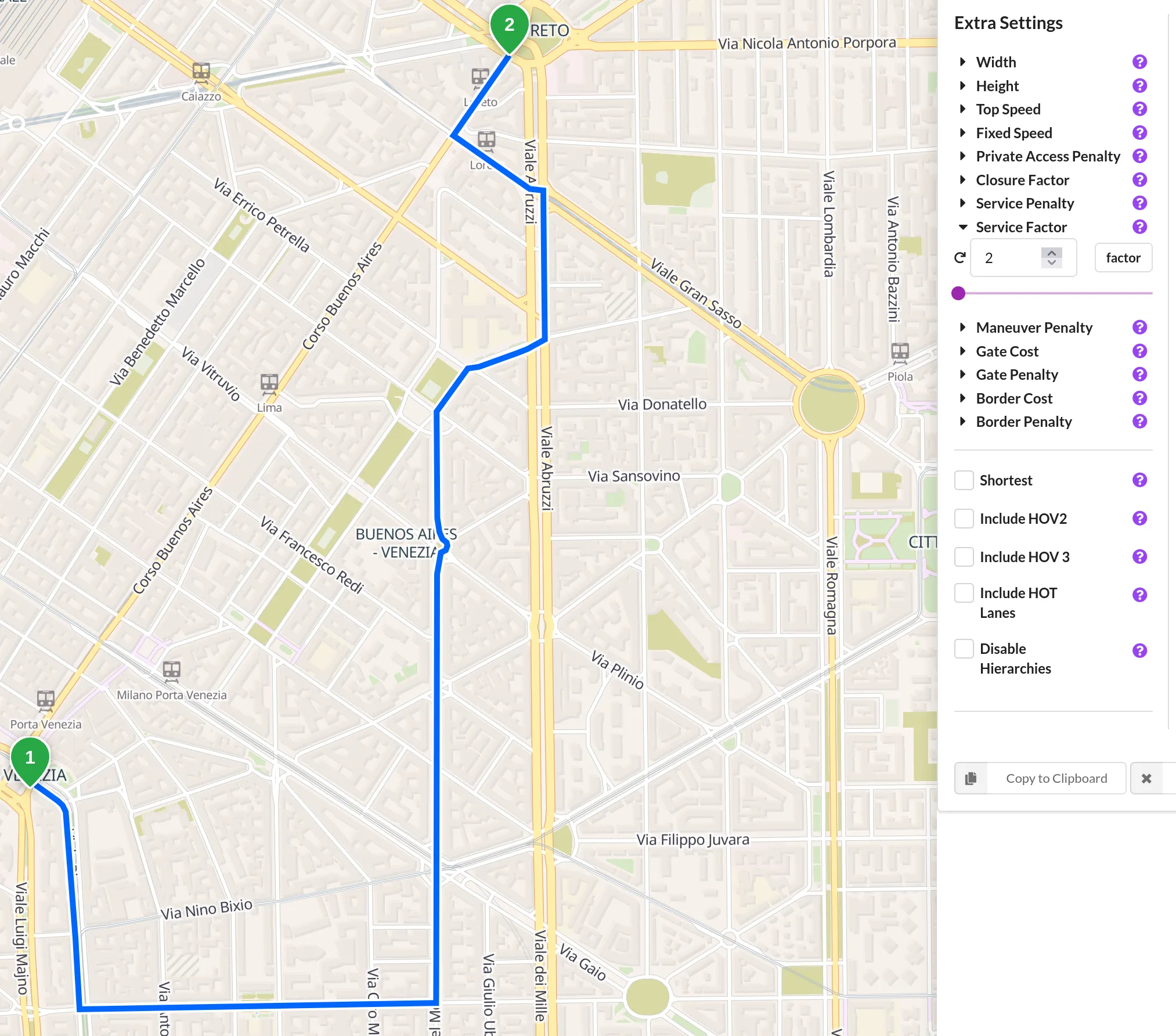

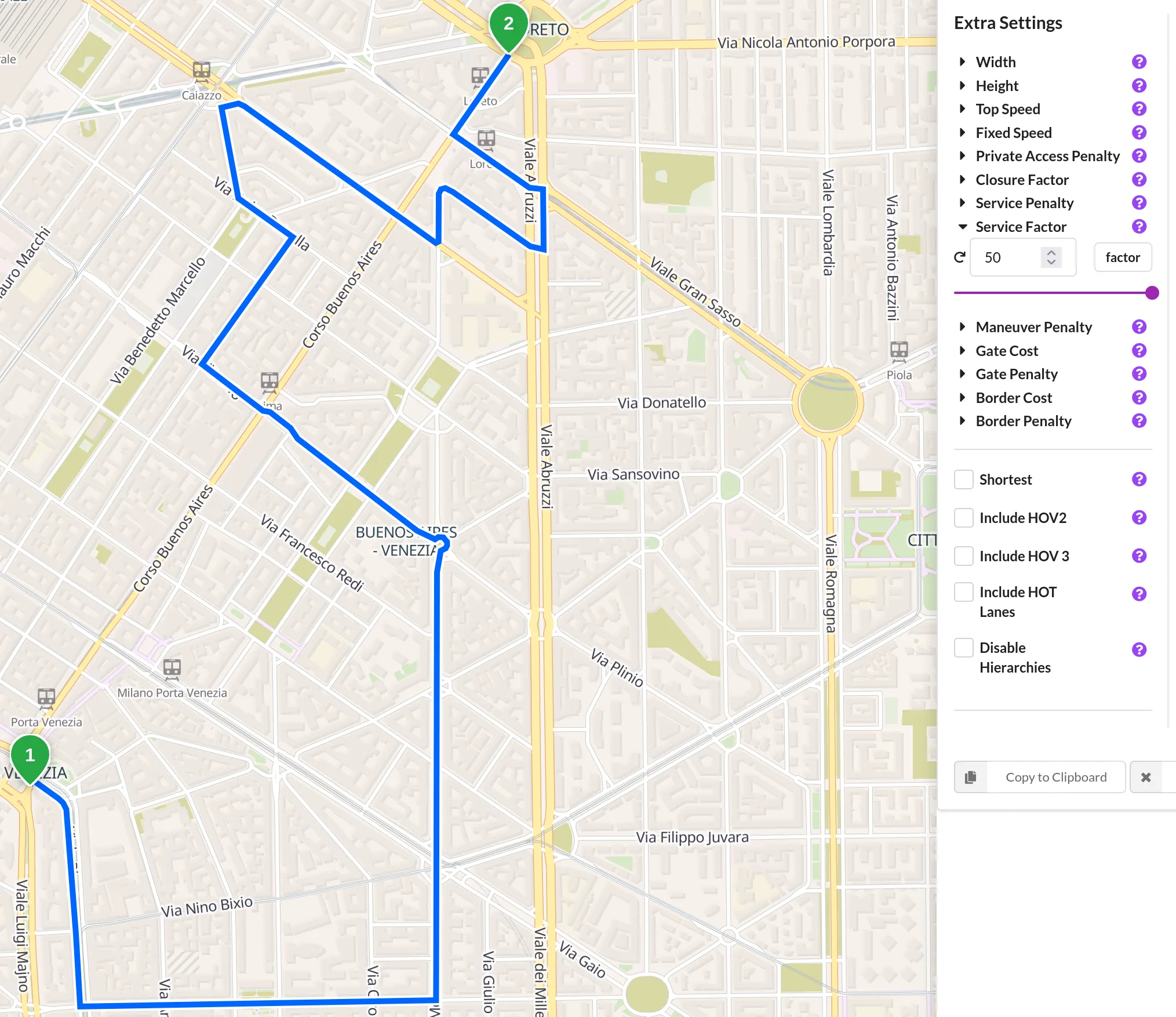

The next step was to create something that, given a route from A to B, would provide an optimised route striking a good balance between time and new discoveries.

To achieve this, I used the trace_attributes Valhalla API endpoint to get a list of the OpenStreetMap ways that I had already visited. Armed with this list, I updated the processed Geofabrik extracts by changing the properties of these ways:

- maxspeed to “10” (km/h)

- highway to “service”
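The two steps just described can be sketched as follows. This is an illustration rather than my actual code: the request body follows Valhalla's documented `trace_attributes` format with a filter on `edge.way_id`, while the function names, the `auto` costing and the `map_snap` matching mode are assumptions. Applying the overrides to a real extract would need a tool such as osmium, which is not shown.

```python
Point = tuple[float, float]  # (lat, lon) in degrees


def trace_attributes_payload(track: list[Point]) -> dict:
    """JSON body for POST /trace_attributes that requests only OSM way ids."""
    return {
        "shape": [{"lat": lat, "lon": lon} for lat, lon in track],
        "costing": "auto",
        "shape_match": "map_snap",
        "filters": {"attributes": ["edge.way_id"], "action": "include"},
    }


def visited_way_ids(response: dict) -> set[int]:
    """Collect the distinct OSM way ids from a trace_attributes response."""
    return {edge["way_id"] for edge in response.get("edges", []) if "way_id" in edge}


def penalise_if_visited(way_id: int, tags: dict[str, str], visited: set[int]) -> dict[str, str]:
    """Return the tags to write back: slow service road if already driven."""
    if way_id not in visited:
        return tags
    return {**tags, "maxspeed": "10", "highway": "service"}
```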

I can then use Valhalla directly with this distorted view of the world. By adjusting the ‘service factor’, I can force it to avoid more or fewer of the roads I’ve already travelled on, while still maintaining some degree of path optimisation.
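A route request with an adjustable service factor might look like the sketch below. `service_factor` is a documented costing option for Valhalla's `auto` costing; the server URL, the function names and the example values are assumptions for illustration.

```python
import json
from urllib.request import Request, urlopen

VALHALLA_URL = "http://localhost:8002"  # assumption: a local Valhalla instance

Point = tuple[float, float]  # (lat, lon) in degrees


def route_payload(start: Point, end: Point, service_factor: float) -> dict:
    """JSON body for POST /route; a higher factor makes service roads costlier."""
    return {
        "locations": [
            {"lat": start[0], "lon": start[1]},
            {"lat": end[0], "lon": end[1]},
        ],
        "costing": "auto",
        "costing_options": {"auto": {"service_factor": service_factor}},
    }


def request_route(payload: dict, base_url: str = VALHALLA_URL) -> dict:
    """Send the payload to Valhalla and return the decoded JSON response."""
    request = Request(
        f"{base_url}/route",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urlopen(request, timeout=30) as response:
        return json.load(response)
```

Because visited ways are now tagged as service roads, raising `service_factor` pushes the route further onto unseen roads, and lowering it towards 1 brings it back to a conventional fastest route.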

Conclusions

Using a car dashcam for OpenStreetMap work was a small change that had a significant impact. The process of manually reading coordinates from videos evolved into an automated, repeatable pipeline leveraging simple tools such as GPX extraction and JOSM, and later more advanced tools including a Tauri helper app, a FastAPI/Vite/React web application, and spatial processing with PostGIS. The result is faster editing, better prioritisation, and improved route planning. I can now identify clips offering the most new coverage, locate missing speed limit segments requiring attention and prevent the same sections of road from being mapped again.